Researchers used ChatGPT to diagnose eye-related complaints and found it performed well.

Richard Drew/AP

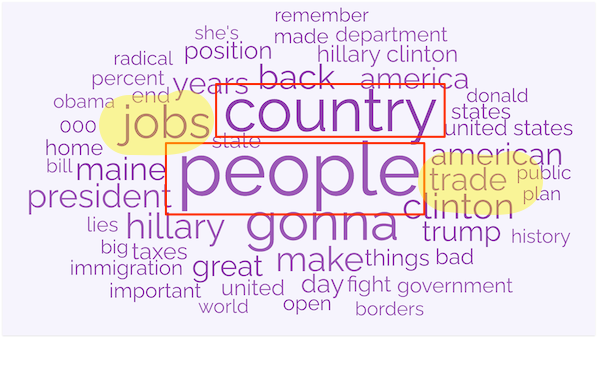

As a fourth-year ophthalmology resident at Emory University School of Medicine, Riley Lyons’ biggest responsibilities include triage: When a patient comes in with an eye-related complaint, Lyons must make an immediate assessment of its urgency.

He often finds patients have already turned to “Dr. Google.” Online, Lyons said, they are likely to find that “any number of terrible things could be going on based on the symptoms that they're experiencing.”

So, when two of Lyons’ fellow ophthalmologists at Emory came to him and suggested evaluating the accuracy of the AI chatbot ChatGPT in diagnosing eye-related complaints, he jumped at the chance.

In June, Lyons and his colleagues reported in medRxiv, an online publisher of health science preprints, that ChatGPT compared quite well to human doctors who reviewed the same symptoms, and performed vastly better than the symptom checker on the popular health website WebMD.

And despite the much-publicized “hallucination” problem known to afflict ChatGPT (its habit of occasionally making outright false statements), the Emory study reported that the most recent version of ChatGPT made zero “grossly inaccurate” statements when presented with a standard set of eye complaints.

The relative proficiency of ChatGPT, which debuted in November 2022, was a surprise to Lyons and his co-authors. The artificial intelligence engine “is definitely an improvement over just putting something into a Google search bar and seeing what you find,” said co-author Nieraj Jain, an assistant professor at the Emory Eye Center who specializes in vitreoretinal surgery and disease.

Filling in gaps in care with AI

But the findings underscore a challenge facing the health care industry as it assesses the promise and pitfalls of generative AI, the type of artificial intelligence used by ChatGPT.

The accuracy of chatbot-delivered medical information may represent an improvement over Dr. Google, but there are still many questions about how to integrate this new technology into health care systems with the same safeguards historically applied to the introduction of new drugs or medical devices.

The smooth syntax, authoritative tone, and dexterity of generative AI have drawn extraordinary attention from all sectors of society, with some comparing its future impact to that of the internet itself. In health care, companies are working feverishly to implement generative AI in areas such as radiology and medical records.

When it comes to consumer chatbots, though, there is still caution, even though the technology is already widely available and better than many alternatives. Many doctors believe AI-based medical tools should undergo an approval process similar to the FDA’s regime for drugs, but that might be years away. It's unclear how such a regime might apply to general-purpose AIs like ChatGPT.

“There's no question we have issues with access to care, and whether or not it is a good idea to deploy ChatGPT to cover the holes or fill the gaps in access, it's going to happen and it's happening already,” said Jain. “People have already discovered its utility. So, we need to understand the potential advantages and the pitfalls.”

Bots with good bedside manner

The Emory study is not alone in ratifying the relative accuracy of the new generation of AI chatbots. A report published in Nature in early July by a group led by Google computer scientists said answers generated by Med-PaLM, an AI chatbot the company built specifically for medical use, “compare favorably with answers given by clinicians.”

AI may also have better bedside manner. Another study, published in April by researchers from the University of California-San Diego and other institutions, even noted that health care professionals rated ChatGPT answers as more empathetic than responses from human doctors.

Indeed, a number of companies are exploring how chatbots could be used for mental health therapy, and some investors in the companies are betting that healthy people might also enjoy chatting and even bonding with an AI “friend.” The company behind Replika, one of the most advanced of that genre, markets its chatbot as, “The AI companion who cares. Always here to listen and talk. Always on your side.”

“We need physicians to start realizing that these new tools are here to stay and they're offering new capabilities both to physicians and patients,” said James Benoit, an AI consultant.

While a postdoctoral fellow in nursing at the University of Alberta in Canada, Benoit published a study in February reporting that ChatGPT significantly outperformed online symptom checkers in evaluating a set of medical scenarios. “They're accurate enough at this point to start meriting some consideration,” he said.

An invitation to trouble

Still, even the researchers who have demonstrated ChatGPT’s relative reliability are cautious about recommending that patients put their full trust in the current state of AI. For many medical professionals, AI chatbots are an invitation to trouble: They cite a number of issues regarding privacy, safety, bias, liability, transparency, and the current absence of regulatory oversight.

The proposition that AI should be embraced because it represents a marginal improvement over Dr. Google is unconvincing, these critics say.

“That's a little bit of a disappointing bar to set, isn't it?” said Mason Marks, a professor and MD who specializes in health law at Florida State University. He recently wrote an opinion piece on AI chatbots and privacy in the Journal of the American Medical Association.

“I don't know how helpful it is to say, ‘Well, let's just throw this conversational AI on as a band-aid to make up for these deeper systemic issues,’” he told KFF Health News.

The biggest danger, in his view, is the likelihood that market incentives will result in AI interfaces designed to steer patients to particular drugs or medical services. “Companies might want to push a particular product over another,” said Marks. “The potential for exploitation of people and the commercialization of data is unprecedented.”

OpenAI, the company that developed ChatGPT, also urged caution.

“OpenAI’s models are not fine-tuned to provide medical information,” a company spokesperson said. “You should never use our models to provide diagnostic or treatment services for serious medical conditions.”

John Ayers, a computational epidemiologist who was the lead author of the UCSD study, said that as with other medical interventions, the focus should be on patient outcomes.

“If regulators came out and said that if you want to provide patient services using a chatbot, you have to demonstrate that chatbots improve patient outcomes, then randomized controlled trials would be registered tomorrow for a number of outcomes,” Ayers said.

He would like to see a more urgent stance from regulators.

“One hundred million people have ChatGPT on their phone,” said Ayers, “and are asking questions right now. People are going to use chatbots with or without us.”

At present, though, there are few signs that rigorous testing of AIs for safety and effectiveness is imminent. In May, Robert Califf, the commissioner of the FDA, described “the regulation of large language models as critical to our future,” but aside from recommending that regulators be “nimble” in their approach, he offered few details.

In the meantime, the race is on. In July, The Wall Street Journal reported that the Mayo Clinic was partnering with Google to integrate the Med-PaLM 2 chatbot into its system. In June, WebMD announced it was partnering with a Pasadena, California-based startup, HIA Technologies Inc., to provide interactive “digital health assistants.”

And the ongoing integration of AI into both Microsoft’s Bing and Google Search suggests that Dr. Google is already well on its way to being replaced by Dr. Chatbot.

This article was produced by KFF Health News, which publishes California Healthline, an editorially independent service of the California Health Care Foundation.
