Forcing AI on everyone: Google has been rolling out AI Overviews to its US users over the past several days. While the company claims that the AI summaries that appear at the top of the results are mostly correct and fact-based, an alarming number of users have encountered so-called hallucinations, in which an LLM states a falsehood as fact. Users aren’t impressed.

In my early testing of the experimental feature, I found the blurbs more obnoxious than helpful. They appear at the top of the results page, so I have to scroll down to get to the material I want. They’re frequently incorrect in the finer details and often plagiarize an article word for word. These annoyances prompted me to write last week’s article explaining several ways to bypass the intrusive feature now that Google is shoving it down our throats with no off switch.

Now that AI Overviews has had a few days to percolate in the public, users are finding many examples where the feature fails. Social media is flooded with funny and obvious examples of Google’s AI trying too hard. Keep in mind that people tend to shout when things go wrong and stay silent when they work as advertised.

“The examples we’ve seen are generally very uncommon queries and aren’t representative of most people’s experiences,” a Google spokesperson told Ars Technica. “The vast majority of AI Overviews provide high quality information, with links to dig deeper on the web.”

While it may be true that most people get good summaries, how many bad ones are allowed before the feature is considered untrustworthy? In an era where everyone is screaming about misinformation, including Google, it would seem that the company would care more about the bad examples than patting itself on the back over the good ones, especially when its Overviews are telling people that running with scissors is good cardio.

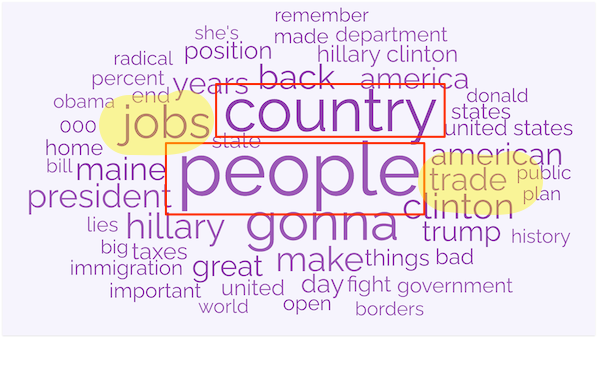

Artificial intelligence entrepreneur Kyle Balmer highlights some funnier examples in a quick X video (below).

Google AI overview is a nightmare. Looks like they rushed it out the door. Now the internet is having a field day. Heres are some of the best examples https://t.co/ie2whhQdPi

– Kyle Balmer (@iamkylebalmer) May 24, 2024

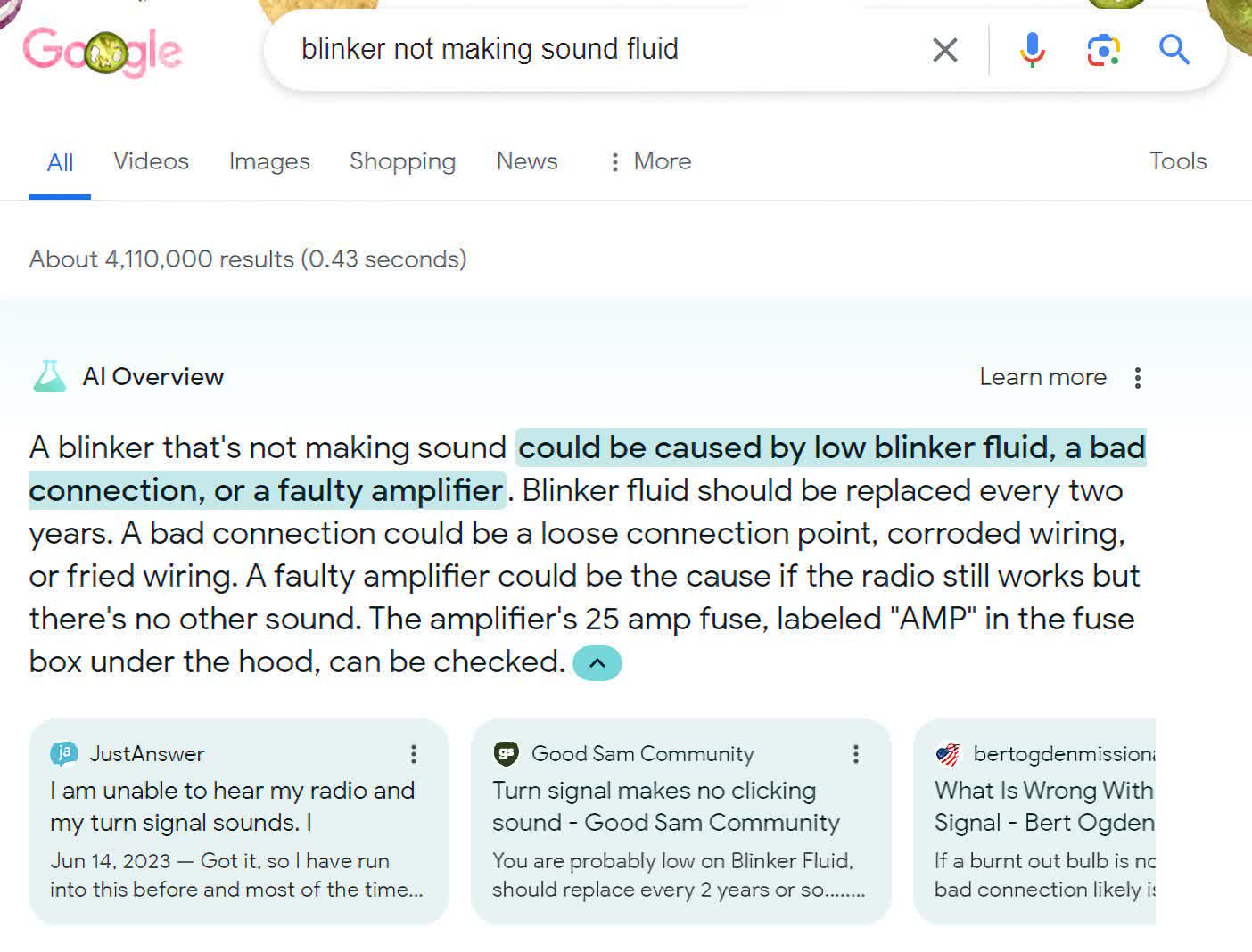

It is important to note that some of these responses are intentionally adversarial. For example, in this one posted by Ars Technica, the word “fluid” has no business being in the search other than to reference the old troll/joke, “you need to change your blinker fluid.”

The joke has existed since I was in high school shop class, but in its attempt to provide an answer that encompasses all the search terms, Google’s AI picked up the idea from a troll on the Good Sam Community Forum.

How about listing some actresses who are in their 50s?

google’s AI is so good, it is truthfully scary pic.twitter.com/7aa68Vo3dw

– Joe Kwaczala (@joekjoek) May 19, 2024

While .250 is an okay batting average, one out of four does not make an accurate list. Also, I bet Elon Musk would be surprised to find out that he graduated from the University of California, Berkeley. According to Encyclopedia Britannica, he actually received two degrees from the University of Pennsylvania. The closest he got to Berkeley was two days at Stanford before dropping out.

The same type of error is sadly trivial to produce, eg, tech ceos who went to Berkeley. pic.twitter.com/mDVXT714C7

– MMitchell (@mmitchell_ai) May 22, 2024

Blatantly obvious errors or suggestions, like mixing glue with your pizza sauce to keep your cheese from falling off, will not likely cause anybody harm. However, if you need serious and accurate answers, even one wrong summary is enough to make this feature untrustworthy. And if you can’t trust it and must fact-check it by looking at the regular search results, then why is it above everything else saying, “Pay attention to me?”

Part of the problem is what AI Overviews considers a trustworthy source. While Reddit can be a good place for a human to find answers to a question, it’s not so good for an AI that can’t distinguish between fact, fan fiction, and satire. So when it sees someone insensitively and glibly saying that “jumping off the Golden Gate Bridge” can cure someone of their depression, the AI can’t understand that the poster was trolling.

Another part of the problem is that Google is rushing out Overviews in a panic to compete with OpenAI. There are better ways to do that than by sullying its reputation as the leader in search engines by forcing users to wade through nonsense they didn’t ask for. At the very least, it should be an optional feature, if not an entirely separate product.

Enthusiasts, including Google’s PR team, say, “It’s only going to get better with time.”

That may be, but I’ve used (read: tolerated) the feature since January, when it was still optional, and have seen little change in the quality of its output. So, jumping on the bandwagon doesn’t cut it for me. Google is too widely used and trusted for that.
